T'was the day before genesis, when all was prepared, geth was in sync, my beacon node paired. Firewalls configured, VLANs galore, hours of preparation meant nothing ignored.

Then all of a sudden everything went awry, the SSD in my system decided to die. My configs were gone, chain data was history, nothing to do but trust in next day delivery.

I found myself designing backups and redundancies. Complicated systems consumed my fantasies. Thinking further, I came to realise: worrying about such failures was quite unwise.

Incentives

The beacon chain employs several mechanisms to incentivise validator behaviour, all of which depend on the network's current state. It is vital to consider failure cases in the larger context of how other validators might fail when deciding what are, and what are not, worthwhile ways to protect your node(s).

As an active validator, your balance either goes up or goes down; it never goes sideways*. A very reasonable way to maximise your profits is therefore to minimise your downsides. There are three ways the beacon chain can reduce your balance:

- Penalties are issued when your validator misses one of its duties (for example, because it is offline).

- Inactivity leaks are handed out to validators that miss their duties while the network is failing to finalise (that is, when your validator being offline is highly correlated with other validators being offline).

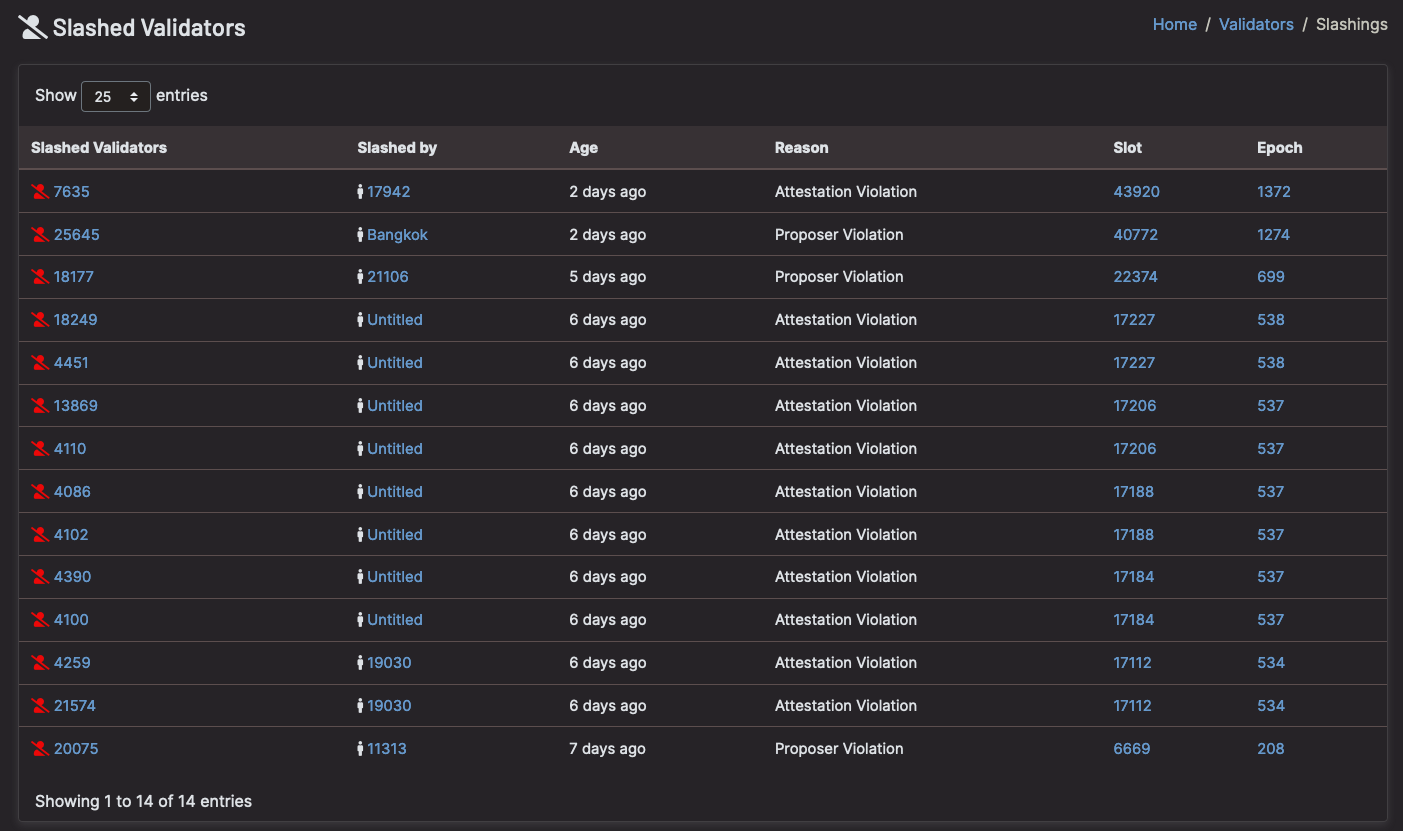

- Slashings are given to validators that produce contradictory blocks or attestations, which could therefore be used in an attack.

* On average, a validator's balance may stay the same, but for any given duty it is either rewarded or penalised.

Correlation

The effect of a single validator being offline or performing a slashable action is small in terms of the overall health and safety of the beacon chain. It is therefore not punished severely. In contrast, if many validators are offline, the balances of the offline validators can decrease much more quickly.

Similarly, if many validators perform slashable actions around the same time, then from the perspective of the beacon chain this is indistinguishable from an attack. It is therefore treated as such, and all of the offending validators' stake is burned.

Because of these "anti-correlation" incentives, validators should worry more about failures that could affect many others at the same time than about isolated, individual problems.
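
The way correlation scales the slashing penalty can be sketched roughly as follows. This is a simplified illustration, not the beacon chain's actual implementation: it ignores the initial minimum penalty and integer arithmetic, and the function name and the `multiplier` value are assumptions chosen for illustration.

```python
def correlation_penalty(effective_balance, slashed_balance, total_balance, multiplier=3):
    """Extra slashing penalty that grows with the stake slashed around the same time.

    All balances are in ETH. `multiplier` is an illustrative assumption.
    """
    # Fraction of total stake slashed, scaled by the multiplier and capped at 100%.
    slashed_fraction = min(slashed_balance * multiplier, total_balance) / total_balance
    return effective_balance * slashed_fraction


# A lone slashed validator loses almost nothing extra...
isolated = correlation_penalty(32, 32, 3_200_000)
# ...while a validator slashed alongside a third of the stake loses everything.
correlated = correlation_penalty(32, 1_100_000, 3_200_000)
```

With these hypothetical numbers, the isolated validator's extra penalty is a tiny fraction of an ETH, whereas the correlated one forfeits its full effective balance.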

Causes and their probability

So let's think through some failure cases, and examine them in terms of how many others would be affected at the same time and how severely your validators would be punished.

I disagree with @econoar here that these are worst-case problems. These are more moderate problems. Home UPS and dual WAN address failures that are not correlated with other users.

🌍 Internet/Power failure

If you are validating from home, it is very likely that you will run into one of these failures at some point in the future. Residential internet and power connections have no guaranteed uptime. However, when the internet or power does go down, the outage is usually limited to your local area.

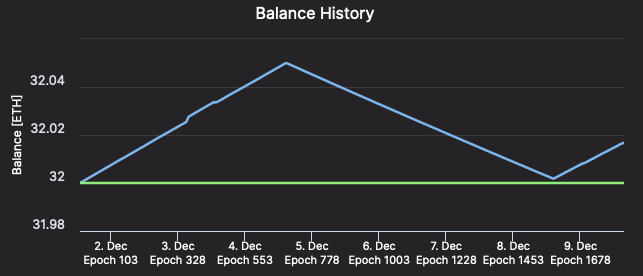

Unless you have very spotty internet or power, it may not be worth paying for a failover connection. You will incur penalties for a brief time, but because the rest of the network is operating normally, your penalties will be roughly equal to the rewards you would have earned over the same period. In other words, a k-hour failure sets your validator's balance back to roughly where it was k hours before the failure, and after k additional hours your validator's balance is back to its pre-failure amount.

[Validator #12661 regaining ETH as quickly as it was lost – Beaconcha.in]
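
As a back-of-the-envelope sketch of this symmetry, the snippet below assumes that offline penalties exactly equal the rewards that would have been earned, and that no inactivity leak is in effect. The function name and the hourly reward figure are hypothetical.

```python
def balance_after_outage(balance, reward_per_hour, outage_hours, recovery_hours):
    """Balance after an outage followed by some hours back online.

    Assumes the hourly offline penalty equals the hourly online reward,
    which only holds roughly, and only while the network is finalising.
    """
    penalties = reward_per_hour * outage_hours
    rewards = reward_per_hour * recovery_hours
    return balance - penalties + rewards


# A 5-hour outage followed by 5 hours online ends up where it started.
start = 32.0
end = balance_after_outage(start, reward_per_hour=0.001, outage_hours=5, recovery_hours=5)
```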

🛠 Hardware failure

Like internet failure, hardware failure strikes randomly, and when it does, your node might be down for a few days. It is valuable to consider the expected rewards over the lifetime of the validator versus the cost of redundant hardware. Is the expected value of the failure (the offline penalties times the chance of it happening) greater than the cost of the redundant hardware?

Personally, the chance of failure is low enough and the cost of fully redundant hardware high enough, that it almost certainly isn’t worth it. But then again, I am not a whale 🐳 ; as with any failure scenario, you need to evaluate how this applies to your particular situation.
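
The comparison described above can be written down as a tiny calculation. This is only a sketch: the function names are illustrative, and the probabilities and costs are made-up inputs that you would need to estimate for your own set-up, all expressed in the same unit (for example ETH over the validator's lifetime).

```python
def expected_failure_cost(failure_probability, penalty_if_failure):
    """Expected cost of a failure: chance of it happening times its penalty."""
    return failure_probability * penalty_if_failure


def mitigation_is_worthwhile(failure_probability, penalty_if_failure, mitigation_cost):
    """True when the expected cost of the failure exceeds the cost of preventing it."""
    return expected_failure_cost(failure_probability, penalty_if_failure) > mitigation_cost


# Hypothetical numbers: a 5% chance of losing 0.1 ETH does not justify
# spending 0.5 ETH on redundant hardware.
worth_it = mitigation_is_worthwhile(0.05, 0.1, 0.5)
```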

☁️ Cloud services failure

Maybe, to avoid the risks of hardware or internet failure altogether, you decide to go with a cloud provider. With a cloud provider, you have introduced the risk of correlated failures. The question that matters is, how many other validators are using the same cloud provider as you?

A week before genesis, Amazon AWS had a prolonged outage which affected a large portion of the web. If something similar were to happen now, enough validators would go offline at the same time that the inactivity penalties would kick in.

Even worse, if a cloud provider were to duplicate the VM running your node and accidentally leave the old and the new node running at the same time, you could be slashed (the penalties incurred would be especially bad if this accidental duplication affected many other nodes too).

If you are insistent on relying on a cloud provider, consider switching to a smaller provider. It may end up saving you a lot of ETH.

🥩 Staking Services

There are several staking services on mainnet today with varying degrees of decentralisation, but they all contain an increased risk of correlated failures if you trust them with your ETH. These services are necessary components of the eth2 ecosystem, especially for those with less than 32 ETH or without the technical know-how to stake, but they are architected by humans and therefore imperfect.

If staking pools eventually grow to be as large as eth1 mining pools, then it is conceivable that a bug could cause mass slashings or inactivity penalties for their members.

🔗 Infura Failure

Last month Infura went down for 6 hours causing outages across the Ethereum ecosystem; it is easy to see how this is likely to result in correlated failures for eth2 validators.

In addition, 3rd party eth1 API providers necessarily rate-limit calls to their service: In the past this has caused validators to be unable to produce valid blocks (on the Medalla testnet).

The best solution is to run your own eth1 node: you won’t encounter rate-limiting, it will reduce the likelihood of your failures being correlated, and it will improve the decentralisation of the network as a whole.

Eth2 clients have also started adding the possibility of specifying multiple eth1 nodes. This makes it easy to switch to a backup endpoint in the event your primary endpoint fails (Lighthouse: --eth1-endpoints, Prysm: PR#8062, Nimbus & Teku will likely add support sometime in the future).

I highly recommend adding backup API options as cheap/free insurance (EthereumNodes.com shows the free and paid API endpoints and their current status). This is useful whether you are running your own eth1 node or not.
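
To illustrate the idea of falling back to a backup endpoint, here is a minimal sketch. It is not how any particular eth2 client implements this; the function names, the `.invalid` URLs, and the stand-in `demo_fetch` function are all hypothetical, and a real set-up would replace `demo_fetch` with an actual request to the eth1 node.

```python
from typing import Callable, Sequence, Tuple


def first_working_endpoint(
    endpoints: Sequence[str], fetch: Callable[[str], str]
) -> Tuple[str, str]:
    """Try each endpoint in order and return (url, response) for the first that works."""
    errors = []
    for url in endpoints:
        try:
            return url, fetch(url)
        except Exception as exc:  # any failure means: move on to the next endpoint
            errors.append(f"{url}: {exc}")
    raise RuntimeError("all eth1 endpoints failed: " + "; ".join(errors))


def demo_fetch(url: str) -> str:
    """Stand-in for a real request: the primary endpoint is 'down'."""
    if "primary" in url:
        raise ConnectionError("rate limited")
    return "ok"


used_url, response = first_working_endpoint(
    ["http://primary.invalid", "http://backup.invalid"], demo_fetch
)
```

Listing your own node first and a third-party API second gives you the fallback without relying on the third party in normal operation.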

🦏 Failure of a particular eth2 client

Despite all the code review, audits, and rockstar work, all of the eth2 clients have bugs hiding somewhere. Most of them are minor and will be caught before they present a major problem in production, but there is always the chance that the client you choose will go offline or cause you to be slashed. If this were to happen, you would not want to be running a client with > 1/3 of the nodes on the network.
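
The 1/3 threshold follows from finality requiring at least 2/3 of the stake to attest. The sketch below checks whether the chain could still finalise if every validator running one client went offline. It assumes, for simplicity, that a client's share of nodes equals its share of stake; the function name is illustrative.

```python
from fractions import Fraction


def can_finalise_without(client_share: Fraction) -> bool:
    """True if the remaining stake still reaches the 2/3 needed for finality."""
    remaining = 1 - client_share
    return remaining >= Fraction(2, 3)


# A client with 1/4 of the stake can fail without halting finality;
# a client with 2/5 of the stake cannot.
small_client_ok = can_finalise_without(Fraction(1, 4))
large_client_ok = can_finalise_without(Fraction(2, 5))
```

If finality halts, the inactivity leak applies to the offline validators, which is why running a client used by more than 1/3 of the network carries the larger downside.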

You must strike a tradeoff between what you deem to be the best client vs how popular that client is. Consider reading through the documentation of another client so that if something happens to your node, you know what to expect in terms of installing and configuring a different client.

If you have lots of ETH at stake, it is probably worth running multiple clients each with some of your ETH to avoid putting all your eggs in one basket. Otherwise, Vouch is an interesting offering for multi-node staking infrastructure, and Secret Shared Validators are seeing rapid development.

🦢 Black swans

There are of course many unlikely, unpredictable, yet dangerous scenarios that will always present a risk: scenarios that lie outside the obvious decisions about your staking set-up. Examples such as Spectre and Meltdown at the hardware level, or kernel bugs such as BleedingTooth, hint at some of the hazards that exist across the entire hardware stack. By definition, it is not possible to entirely predict and avoid these problems; instead, you generally must react after the fact.

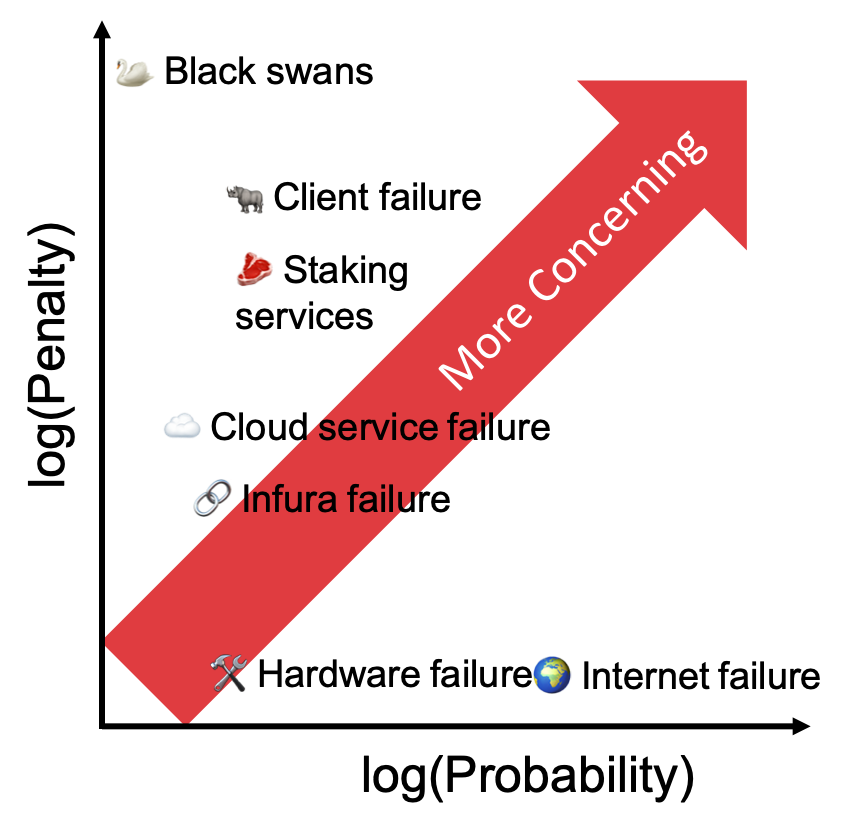

What to worry about

Ultimately this comes down to calculating the expected value E(X) of a given failure: how likely an event is to happen, and what the penalties would be if it did. It is vital to consider these failures in the context of the rest of the eth2 network since the correlation greatly affects the penalties at hand. Comparing the expected cost of a failure to the cost of mitigating it will give you the rational answer as to whether it is worth getting in front of.

No one knows all the ways a node can fail, nor how likely each failure is, but by making individual estimates of the chances of each failure type and mitigating the biggest risks, the “wisdom of the crowd” will prevail and on average the network as a whole will make a good estimate. Furthermore, because of the different risks each validator faces, and the differing estimates of those risks, the failures you did not account for will be caught by others and therefore the degree of correlation will be reduced. Yay decentralisation!

📕 DON’T PANIC

Finally, if something does happen to your node, don’t panic! Even during inactivity leaks, penalties are small on short time scales. Take a few moments to think through what happened and why. Then make a plan of action to fix the problem. Then take a deep breath before you proceed. An extra 5 minutes of penalties is preferable to being slashed because you did something ill-advised in a rush.

Most of all: 🚨 Do not run 2 nodes with the same validator keys! 🚨

Thanks Danny Ryan, Joseph Schweitzer, and Sacha Yves Saint-Leger for review

[Slashings because validators ran >1 node – Beaconcha.in]